Correlated errors in quantum computers emphasize need for design changes

Quantum computers could outperform classical computers at many tasks, but only if the errors that inevitably arise during computation are isolated events rather than widespread ones.

Now, researchers at the University of Wisconsin–Madison have found evidence that errors are correlated across an entire superconducting quantum computing chip — highlighting a problem that must be acknowledged and addressed in the quest for fault-tolerant quantum computers.

The researchers report their findings in a study published June 16 in the journal Nature. Importantly, their work also points to mitigation strategies.

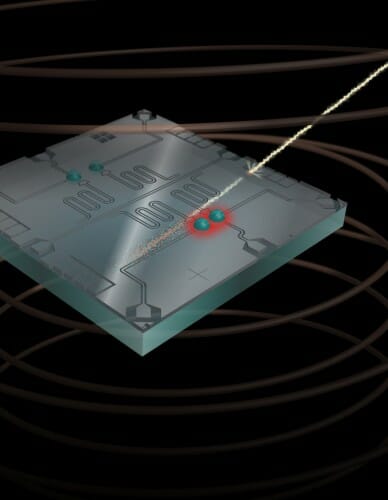

In this artistic rendering, a high-energy cosmic ray hits the qubit chip, freeing up charge in the chip substrate that disrupts the state of neighboring qubits. Image courtesy of Robert McDermott

“I think people have been approaching the problem of error correction in an overly optimistic way, blindly making the assumption that errors are not correlated,” says UW–Madison physics Professor Robert McDermott, senior author of the study. “Our experiments show absolutely that errors are correlated, but as we identify problems and develop a deep physical understanding, we’re going to find ways to work around them.”

The bits in a classical computer can be either a 1 or a 0, but the qubits in a quantum computer can be 1, 0, or an arbitrary mixture of 1 and 0 called a superposition. Classical bits, then, can only make bit flip errors, such as when a 1 flips to 0. Qubits, however, can make two types of error: bit flips, or phase flips, in which the relative sign between the 1 and 0 parts of a superposition changes.
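To make the two error types concrete, here is a minimal sketch in Python. It assumes a qubit can be written as a pair of amplitudes for the 0 and 1 states, and the function names are illustrative rather than taken from the study. It shows that a bit flip swaps the two amplitudes, while a phase flip changes the sign of the 1 amplitude and leaves the chances of measuring 0 or 1 unchanged.

```python
import math

# A single-qubit state is modeled as a pair of amplitudes (a0, a1)
# for the 0 and 1 states. This is an illustrative model only.

def bit_flip(state):
    """Bit flip (Pauli X): swap the amplitudes of 0 and 1."""
    a0, a1 = state
    return (a1, a0)

def phase_flip(state):
    """Phase flip (Pauli Z): flip the sign of the 1 amplitude."""
    a0, a1 = state
    return (a0, -a1)

def probabilities(state):
    """Chance of measuring 0 and of measuring 1."""
    a0, a1 = state
    return (abs(a0) ** 2, abs(a1) ** 2)

one = (0.0, 1.0)                              # the computational 1 state
plus = (1 / math.sqrt(2), 1 / math.sqrt(2))   # equal superposition of 0 and 1

flipped = bit_flip(one)       # the 1 state becomes the 0 state
dephased = phase_flip(plus)   # same measurement odds, opposite relative sign
```

Because the phase flip does not change the 0 and 1 probabilities, it cannot be caught by simply reading out the qubit, which is part of why quantum error correction needs a dedicated protocol.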

To fix errors, computers must monitor them as they happen. But the laws of quantum physics say that only one error type can be monitored at a time in a single qubit, so a clever error correction protocol called the surface code has been proposed. The surface code involves a large array of connected qubits — some do the computational work, while others are monitored to infer errors in the computational qubits. However, the surface code protocol works reliably only if events that cause errors are isolated, affecting at most a few qubits.
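The following sketch illustrates why isolated errors matter. It is a deliberately simplified classical analogy, a three-bit repetition code with majority voting, and not the surface code itself. The probabilities used are assumed values chosen for illustration, not measurements from the study.

```python
from itertools import product

def majority(bits):
    """Return 1 if more than half of the bits are 1, else 0."""
    return int(sum(bits) > len(bits) / 2)

def logical_error_independent(p, n=3):
    """Chance that majority voting fails when each of n bits
    flips independently with probability p."""
    total = 0.0
    for flips in product([0, 1], repeat=n):
        k = sum(flips)
        prob = p ** k * (1 - p) ** (n - k)
        if majority(flips) == 1:   # most bits flipped, so the vote is wrong
            total += prob
    return total

def logical_error_correlated(q, n=3):
    """Chance that majority voting fails when a single event,
    occurring with probability q, flips all n bits at once."""
    return q * majority([1] * n)

# Assumed illustrative error rate of 1% in both scenarios.
independent = logical_error_independent(0.01)   # about 0.0003
correlated = logical_error_correlated(0.01)     # 0.01
```

With independent flips, the redundancy suppresses the failure rate well below the single-bit error rate. When one event flips every bit together, the redundancy provides no protection at all in this toy model.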

In earlier experiments, McDermott’s group had seen hints that something was causing multiple qubits to flip at the same time. In this new study, they directly asked: are these flips independent, or are they correlated?

The research team designed a chip with four qubits made of the superconducting elements niobium and aluminum. The scientists cooled the chip to nearly absolute zero, which makes it superconduct and protects it from error-causing interference from the outside environment.

To assess whether qubit flips were correlated, the researchers measured fluctuations in offset charge for all four qubits. The fluctuating offset charge is effectively a change in electric field at the qubit.

The team observed long periods of relative stability followed by sudden jumps in offset charge. The closer together two qubits were, the more likely they were to jump at the same time. These sudden changes were most likely caused by cosmic rays or background radiation in the lab, both of which release charged particles. When one of these particles hits the chip, it frees up charges that affect nearby qubits.

This local effect can be easily mitigated with simple design changes. The bigger concern is what could happen next.

“If our model about particle impacts is correct, then we would expect that most of the energy is converted into vibrations in the chip that propagate over long distances,” says Chris Wilen, a graduate student and lead author of the study. “As the energy spreads, the disturbance would lead to qubit flips that are correlated across the entire chip.”

In their next set of experiments, that effect is exactly what they saw. They measured charge jumps in one qubit, as in the earlier experiments, then used the timing of these jumps to align measurements of the quantum states of two other qubits. Those two qubits should always be in the computational 1 state. Yet the researchers found that any time they saw a charge jump in the first qubit, the other two — no matter how far away on the chip — quickly flipped from the computational 1 state to the 0 state.
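The idea of using charge jumps as timing markers can be sketched as a triggered average. The code below is a hypothetical illustration, not the team's analysis code: the function name, window sizes, and the record of qubit readouts are all made up for the example, and the synthetic record is built so that the qubit reads 0 for three samples after each jump.

```python
def align_to_triggers(samples, trigger_indices, before, after):
    """Cut a window around each trigger index and average the
    windows point by point. Windows that run off either end of
    the record are skipped."""
    windows = []
    for t in trigger_indices:
        if t - before >= 0 and t + after <= len(samples):
            windows.append(samples[t - before:t + after])
    if not windows:
        return []
    return [sum(column) / len(windows) for column in zip(*windows)]

# Synthetic, made-up record: the qubit reads 1 except for
# three samples following each (hypothetical) charge jump.
samples = [1] * 30
jumps = [8, 20]
for j in jumps:
    for k in range(j, j + 3):
        samples[k] = 0

# Average readout from 2 samples before to 4 samples after each jump.
average = align_to_triggers(samples, jumps, before=2, after=4)
# average == [1.0, 1.0, 0.0, 0.0, 0.0, 1.0]
```

Aligning many windows on the jump time makes a drop that follows each jump stand out in the average, even if individual records are noisy.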

“It’s a long-range effect, and it’s really damaging,” Wilen says. “It’s destroying the quantum information stored in qubits.”

Though this work could be viewed as a setback in the development of superconducting quantum computers, the researchers believe that their results will guide new research toward this problem. Groups at UW–Madison are already working on mitigation strategies.

“As we get closer to the ultimate goal of a fault-tolerant quantum computer, we’re going to identify one new problem after another,” McDermott says. “This is just part of the process of learning more about the system, providing a path to implementation of more resilient designs.”

This study was done in collaboration with Fermilab, Stanford University, INFN Sezione di Roma, Google, Inc., and Lawrence Livermore National Lab. At UW–Madison, support was provided by the U.S. Department of Energy (#DE-SC0020313).